C++ smart pointers rely on the ability to overload `operator->` (and, less importantly, `operator->*`), whose invocation precedes any access to the pointee. Unfortunately, there is no such thing as overloading `operator.` (even though the possibility of doing so has been considered by Bjarne Stroustrup and others), which would allow for the creation of "smart reference" classes. Lacking this ability, a wrapper class has to go through the painful and non-generic process of replicating the interface of the wrapped object. We present here an approach to class interface design, let's call it *slot-based interfaces*, that allows for easy wrapping without losing much expressivity with respect to traditional C++ interfaces. The techniques described are exercised by a program available for download (a fairly C++11-compliant compiler such as GCC 4.8 is needed).

Say we have a container class like the following:

```cpp
template <class T, class Allocator = std::allocator<T>>
class vector {
  ...
  iterator begin();
  const_iterator begin()const;
  iterator end();
  const_iterator end()const;
  ...
  template <class... Args>
  void emplace_back(Args&&... args);
  void push_back(const T& x);
  ...
};
```

The interface of this vector can be changed to one based on slots by replacing each member function with an equivalent overload of operator():

```cpp
template <class T, class Allocator = std::allocator<T>>
class vector {
  ...
  iterator operator()(begin_s);
  const_iterator operator()(begin_s)const;
  iterator operator()(end_s);
  const_iterator operator()(end_s)const;
  ...
  template <class... Args>
  void operator()(emplace_back_s,Args&&... args);
  void operator()(push_back_s,const T& x);
  ...
};
```

where begin_s, end_s, emplace_back_s, etc. are arbitrary, distinct types called slots, each with a corresponding global instance named begin, end, emplace_back, etc. Traditional code such as

```cpp
vector<int> c;
for(int i=0;i<10;++i){
  c.push_back(2*i);
  c.emplace_back(3*i);
}
for(auto it=c.begin(),it_end=c.end();it!=it_end;++it){
  std::cout<<*it<<" ";
}
```

looks like the following when using a slot-based interface:

```cpp
vector<int> c;
for(int i=0;i<10;++i){
  c(push_back,2*i);
  c(emplace_back,3*i);
}
for(auto it=c(begin),it_end=c(end);it!=it_end;++it){
  std::cout<<*it<<" ";
}
```
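The snippets above are fragments. As a minimal sketch, assuming a hypothetical `slot_vector` adapter (our name, not the article's) that forwards each slot to a `std::vector`, the slot-based loop can be made to compile and run; here the loop is wrapped in a function that collects the elements instead of printing them.

```cpp
#include <utility>
#include <vector>

// Passive slots: empty tag types whose only job is to select an overload.
struct begin_s {};
struct end_s {};
struct push_back_s {};
struct emplace_back_s {};

// One global instance per slot, named after the member function it replaces.
const begin_s begin{};
const end_s end{};
const push_back_s push_back{};
const emplace_back_s emplace_back{};

// Hypothetical slot-based adapter: every member is an overload of operator().
template <class T>
class slot_vector {
  std::vector<T> v;

public:
  using iterator = typename std::vector<T>::iterator;
  using const_iterator = typename std::vector<T>::const_iterator;

  iterator operator()(begin_s) { return v.begin(); }
  const_iterator operator()(begin_s) const { return v.begin(); }
  iterator operator()(end_s) { return v.end(); }
  const_iterator operator()(end_s) const { return v.end(); }

  template <class... Args>
  void operator()(emplace_back_s, Args&&... args) {
    v.emplace_back(std::forward<Args>(args)...);
  }
  void operator()(push_back_s, const T& x) { v.push_back(x); }
};

// The loop from the text, collecting the elements instead of printing them.
std::vector<int> collect() {
  slot_vector<int> c;
  for (int i = 0; i < 10; ++i) {
    c(push_back, 2 * i);
    c(emplace_back, 3 * i);
  }
  std::vector<int> out;
  for (auto it = c(begin), it_end = c(end); it != it_end; ++it) out.push_back(*it);
  return out;
}
```

Calling `collect()` from any `main()` yields the elements 0, 0, 2, 3, 4, 6, ... 18, 27, the same sequence the traditional interface would produce.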

Legibility is still excellent, if a little odd at first. The big advantage of a slot-based interface is that all of it is mediated through invocations of (overloads of) operator(), so wrapping, with the aid of C++11 perfect forwarding, is an extremely simple business:

```cpp
#include <iostream>
#include <typeinfo>
#include <utility>

template <typename T>
class logger
{
  T t;

public:
  template<typename ...Args>
  logger(Args&&... args):t(std::forward<Args>(args)...){}

  template<typename Q,typename ...Args>
  auto operator()(Q&& q,Args&&... args)
  ->decltype(t(std::forward<Q>(q),std::forward<Args>(args)...))
  {
    std::cout<<typeid(q).name()<<std::endl;
    return t(std::forward<Q>(q),std::forward<Args>(args)...);
  }

  template<typename Q,typename ...Args>
  auto operator()(Q&& q,Args&&... args)const
  ->decltype(t(std::forward<Q>(q),std::forward<Args>(args)...))
  {
    std::cout<<typeid(q).name()<<std::endl;
    return t(std::forward<Q>(q),std::forward<Args>(args)...);
  }
};
```
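To see the drop-in property concretely, here is a small sketch with a hypothetical slot-based `counter` class and hypothetical slots `add_s` and `value_s` (none of these come from the article), wrapped in the `logger` shown above. The same client template `use` works unchanged for both `counter` and `logger<counter>`.

```cpp
#include <iostream>
#include <typeinfo>
#include <utility>

// Hypothetical slots and their global instances.
struct add_s {};
struct value_s {};
const add_s add{};
const value_s value{};

// Hypothetical slot-based class.
class counter {
  int n = 0;

public:
  void operator()(add_s, int k) { n += k; }
  int operator()(value_s) const { return n; }
};

// logger as in the text: forwards every call and prints the slot's type name.
template <typename T>
class logger {
  T t;

public:
  template <typename... Args>
  logger(Args&&... args) : t(std::forward<Args>(args)...) {}

  template <typename Q, typename... Args>
  auto operator()(Q&& q, Args&&... args)
      -> decltype(t(std::forward<Q>(q), std::forward<Args>(args)...)) {
    std::cout << typeid(q).name() << std::endl;
    return t(std::forward<Q>(q), std::forward<Args>(args)...);
  }

  template <typename Q, typename... Args>
  auto operator()(Q&& q, Args&&... args) const
      -> decltype(t(std::forward<Q>(q), std::forward<Args>(args)...)) {
    std::cout << typeid(q).name() << std::endl;
    return t(std::forward<Q>(q), std::forward<Args>(args)...);
  }
};

// Client code written once against the slot-based interface.
template <typename Counter>
int use(Counter& c) {
  c(add, 3);
  c(add, 4);
  return c(value);
}
```

Both `use(c)` with a plain `counter` and `use(lc)` with a `logger<counter>` return 7; the logged variant additionally prints the slot type names, whose exact spelling returned by `typeid(...).name()` is implementation-defined (GCC prints mangled names).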

logger<T> merely adorns the interface of T (which, remember, consists entirely of overloads of operator()) by logging the name of the first argument's type, i.e. the slot used. Replacing T with logger<T> in any piece of code works immediately without further modifications, regardless of the interface of T (provided, of course, it is slot-based). The following is probably more interesting:

```cpp
#include <memory>
#include <utility>

template <typename T>
class shared_ref
{
  std::shared_ptr<T> p;

public:
  template<typename ...Args>
  shared_ref(Args&&... args):p(std::forward<Args>(args)...){}

  template<typename ...Args>
  auto operator()(Args&&... args)
  ->decltype((*p)(std::forward<Args>(args)...))
  {
    return (*p)(std::forward<Args>(args)...);
  }
};
```

Much as shared_ptr<T> acts as a regular T*, shared_ref<T> acts as a regular T& whose (slot-based) interface can be used without restriction. This even works with run-time polymorphism based on virtual overloads of operator().
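A minimal sketch of the polymorphic case, assuming a hypothetical abstract `shape` whose slot-based interface is a single virtual overload of operator() taking a hypothetical `area_s` slot. `shared_ref<shape>` is built from a `std::shared_ptr` to a derived class, and each call dispatches at run time.

```cpp
#include <memory>
#include <utility>

// Hypothetical slot.
struct area_s {};
const area_s area{};

// Abstract slot-based interface: a virtual overload of operator().
struct shape {
  virtual ~shape() {}
  virtual double operator()(area_s) const = 0;
};

struct square : shape {
  double side;
  explicit square(double s) : side(s) {}
  double operator()(area_s) const override { return side * side; }
};

struct rect : shape {
  double w, h;
  rect(double w_, double h_) : w(w_), h(h_) {}
  double operator()(area_s) const override { return w * h; }
};

// shared_ref as in the text.
template <typename T>
class shared_ref {
  std::shared_ptr<T> p;

public:
  template <typename... Args>
  shared_ref(Args&&... args) : p(std::forward<Args>(args)...) {}

  template <typename... Args>
  auto operator()(Args&&... args) -> decltype((*p)(std::forward<Args>(args)...)) {
    return (*p)(std::forward<Args>(args)...);
  }
};

double total_area() {
  // shared_ptr<square> and shared_ptr<rect> convert to shared_ptr<shape>.
  shared_ref<shape> a(std::make_shared<square>(3.0));
  shared_ref<shape> b(std::make_shared<rect>(2.0, 5.0));
  return a(area) + b(area);  // virtual dispatch through *p
}
```

`total_area()` returns 9 + 10 = 19. Note that the forwarding constructor also captures copy attempts from non-const `shared_ref` lvalues, so a production version would need to constrain it; the sketch avoids copying.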

We can continue expanding on this new interface paradigm. So far slots have been regarded as passive types whose only mission is to adorn interfaces with convenient names, but nothing stops us from making these slots active classes in a curiously useful way:

```cpp
#include <utility>

struct begin_s
{
  template<typename T,typename ...Args>
  auto operator()(T&& t,Args&&... args)const
  ->decltype(t(*this,std::forward<Args>(args)...))
  {
    return t(*this,std::forward<Args>(args)...);
  }
};

extern begin_s begin;
```

We have defined begin_s as a functor performing the following transformation:

begin(t,...) → t(begin,...),

which allows us to write (assuming all the slots are defined in an equivalent way) code like this:

```cpp
vector<int> c;
for(int i=0;i<10;++i){
  push_back(c,2*i);
  emplace_back(c,3*i);
}
for(auto it=begin(c),it_end=end(c);it!=it_end;++it){
  std::cout<<*it<<" ";
}
```

So slots give us, for free, the additional possibility of being used with global function syntax. In fact, we can take this behavior as the very definition of a slot.

Definition. A basic slot is a functor S resolving each invocation of s(t,...) to an invocation of t(s,...), for any s of type S.
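The text leaves the remaining slots to be "defined in an equivalent way". One compact way to do that, our own choice rather than the article's, is a class template `slot<Tag>` that implements the forwarding of `begin_s` once; each distinct hypothetical tag type then yields a distinct slot type satisfying the definition above. With the same hypothetical `slot_vector` adapter over `std::vector`, the global function syntax compiles as follows.

```cpp
#include <utility>
#include <vector>

// Generic basic slot: s(t, args...) is resolved as t(s, args...).
template <class Tag>
struct slot {
  template <typename T, typename... Args>
  auto operator()(T&& t, Args&&... args) const
      -> decltype(t(*this, std::forward<Args>(args)...)) {
    return t(*this, std::forward<Args>(args)...);
  }
};

// Hypothetical tags; each instantiation is a distinct slot type.
struct begin_tag; struct end_tag; struct push_back_tag; struct emplace_back_tag;
using begin_s = slot<begin_tag>;
using end_s = slot<end_tag>;
using push_back_s = slot<push_back_tag>;
using emplace_back_s = slot<emplace_back_tag>;

const begin_s begin{};
const end_s end{};
const push_back_s push_back{};
const emplace_back_s emplace_back{};

// Hypothetical slot-based adapter over std::vector.
template <class T>
class slot_vector {
  std::vector<T> v;

public:
  typename std::vector<T>::iterator operator()(begin_s) { return v.begin(); }
  typename std::vector<T>::iterator operator()(end_s) { return v.end(); }

  template <class... Args>
  void operator()(emplace_back_s, Args&&... args) {
    v.emplace_back(std::forward<Args>(args)...);
  }
  void operator()(push_back_s, const T& x) { v.push_back(x); }
};

// The global-function-syntax loop from the text, collecting instead of printing.
std::vector<int> collect() {
  slot_vector<int> c;
  for (int i = 0; i < 10; ++i) {
    push_back(c, 2 * i);     // resolved as c(push_back, 2 * i)
    emplace_back(c, 3 * i);  // resolved as c(emplace_back, 3 * i)
  }
  std::vector<int> out;
  for (auto it = begin(c), it_end = end(c); it != it_end; ++it) out.push_back(*it);
  return out;
}
```

Because ordinary lookup of `begin` finds a variable rather than a function, no argument-dependent lookup takes place, so `begin(c)` is unambiguous as long as `std` is not brought into scope with a using-directive.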

Those readers with a mathematical inclination will have detected a nice duality pattern going on here. On the one hand, slot-based classes are functors whose overloads of operator() take slots as their first parameter; on the other hand, slots are functors whose first parameter is assumed to be of a slot-based class type.

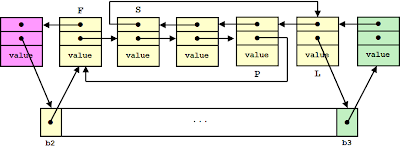

The diagram shows a number of different classes against several slot names, where successful combinations are marked by a dot: in a sense it is immaterial which axis is considered to hold classes and which holds slots, as their roles can be reversed from a syntactical point of view. One unexpected implication of this duality is that slot-based class wrappers also work as slot wrappers. For instance, much as we can write

```cpp
// register access to the interface of c
logger<vector<int>> c;
...
```

we can also write

```cpp
// register invocations to emplace_back
logger<emplace_back_s> logged_emplace_back;
logged_emplace_back(c,100);
...
```

Or, if we make vector open-ended by accepting any slot, we can use logged_emplace_back with member function syntax:

```cpp
template <typename T>
class open_ended
{
  T t;

public:
  template<typename ...Args>
  open_ended(Args&&... args):t(std::forward<Args>(args)...){}

  template<typename Slot,typename ...Args>
  auto operator()(Slot&& s,Args&&... args)
  ->decltype(s(t,std::forward<Args>(args)...))
  {
    return s(t,std::forward<Args>(args)...);
  }

  template<typename Slot,typename ...Args>
  auto operator()(Slot&& s,Args&&... args)const
  ->decltype(s(t,std::forward<Args>(args)...))
  {
    return s(t,std::forward<Args>(args)...);
  }
};

...

logger<emplace_back_s> logged_emplace_back;
open_ended<vector<int>> c;
c(logged_emplace_back,100);
...
```
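Putting the pieces together, here is a self-contained sketch under our own assumptions: slots are stamped out by a generic `slot<Tag>` template, the container is a hypothetical `slot_vector` with hypothetical `size_s` and `at_s` slots, and `logger` and `open_ended` follow the text (their const overloads are omitted for brevity). The same wrapped slot is used with both global and member function syntax.

```cpp
#include <cstddef>
#include <iostream>
#include <typeinfo>
#include <utility>
#include <vector>

// Generic basic slot (our shorthand): s(t, args...) -> t(s, args...).
template <class Tag>
struct slot {
  template <typename T, typename... Args>
  auto operator()(T&& t, Args&&... args) const
      -> decltype(t(*this, std::forward<Args>(args)...)) {
    return t(*this, std::forward<Args>(args)...);
  }
};
struct emplace_back_tag; struct size_tag; struct at_tag;  // hypothetical tags
using emplace_back_s = slot<emplace_back_tag>;
using size_s = slot<size_tag>;
using at_s = slot<at_tag>;
const size_s size{};
const at_s at{};

// Minimal hypothetical slot-based container.
template <class T>
class slot_vector {
  std::vector<T> v;

public:
  template <class... Args>
  void operator()(emplace_back_s, Args&&... args) {
    v.emplace_back(std::forward<Args>(args)...);
  }
  std::size_t operator()(size_s) const { return v.size(); }
  const T& operator()(at_s, std::size_t i) const { return v.at(i); }
};

// logger from the text (const overload omitted).
template <typename T>
class logger {
  T t;

public:
  template <typename... Args>
  logger(Args&&... args) : t(std::forward<Args>(args)...) {}

  template <typename Q, typename... Args>
  auto operator()(Q&& q, Args&&... args)
      -> decltype(t(std::forward<Q>(q), std::forward<Args>(args)...)) {
    std::cout << typeid(q).name() << std::endl;  // here q is the container
    return t(std::forward<Q>(q), std::forward<Args>(args)...);
  }
};

// open_ended from the text (const overload omitted).
template <typename T>
class open_ended {
  T t;

public:
  template <typename... Args>
  open_ended(Args&&... args) : t(std::forward<Args>(args)...) {}

  template <typename Slot, typename... Args>
  auto operator()(Slot&& s, Args&&... args)
      -> decltype(s(t, std::forward<Args>(args)...)) {
    return s(t, std::forward<Args>(args)...);
  }
};

// Returns {size of v, size of c, element 1 of c}.
std::vector<int> demo() {
  logger<emplace_back_s> logged_emplace_back;  // a wrapped slot
  slot_vector<int> v;
  logged_emplace_back(v, 100);  // global function syntax
  open_ended<slot_vector<int>> c;
  c(logged_emplace_back, 100);  // member function syntax via open_ended
  c(logged_emplace_back, 200);
  return {static_cast<int>(size(v)), static_cast<int>(c(size)), c(at, 1)};
}
```

`demo()` returns `{1, 2, 200}`. Note that when a slot is wrapped, `logger` records the type of its first argument, which is now the container rather than a slot, exactly as the duality suggests.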

By identifying class access with class invocation and reifying member function names into first-class functors, slot-based interfaces allow for a great deal of static composability and provide unified concepts blurring the distinction between classes and functions in ways that are of practical utility. The approach is not perfect: a non-standard syntax has to be resorted to, public inheritance needs special provisions, and operators (that is, functions like `operator=`, `operator!`, etc., as opposed to regular member functions) are not covered. With these limitations in mind, slot-based interfaces can come in handy in specialized applications or frameworks requiring interface decoration, smart references or similar constructs.
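As one concrete illustration of the inheritance caveat (our reading; the article does not spell out which provisions it has in mind): since every member of a slot-based class is an overload of operator(), a derived class that declares any overload of its own hides all of the base's overloads, so it has to re-expose them with a using-declaration. The classes `base` and `derived` and the slots `name_s` and `greet_s` below are hypothetical.

```cpp
#include <string>

// Hypothetical slots.
struct name_s {};
struct greet_s {};
const name_s name{};
const greet_s greet{};

struct base {
  std::string operator()(name_s) const { return "base"; }
};

struct derived : base {
  // Without this using-declaration, the operator() declared below would hide
  // base::operator()(name_s), and d(name) would fail to compile.
  using base::operator();
  std::string operator()(greet_s) const { return "hello from " + (*this)(name); }
};
```

With the using-declaration in place, `derived d;` answers both `d(name)` (returning `"base"`) and `d(greet)` (returning `"hello from base"`).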